Hyper-V has been gaining momentum, and with Hyper-V 2012 supporting SMB 3.0-based NAS storage, this momentum is likely to accelerate. And of course, any commercial deployment needs a proper backup policy. This blog examines some simple but often overlooked problems in Hyper-V backup, which all backup vendors have, of course, tackled in one way or another.

In general, there are two ways to back up VMs:

- Backup from within a VM

- Backup from the hypervisor, i.e. the Hyper-V parent partition

In some particular cases, only one of these choices is feasible. Figure 1 shows a VM that uses an iSCSI LUN that is passed through directly to the VM.

After the advent of the VHDX file format and the associated performance and robustness improvements, there are even fewer reasons to use the configuration depicted in Figure 1. However, in this configuration, the only way to back up is by running a backup application within the VM. The speed boost you get by eliminating the NTFS stack within the parent partition is marginal, and live migration of a VM with this configuration involves a LUN transfer when the iSCSI volume needs to be moved to a different Hyper-V host.

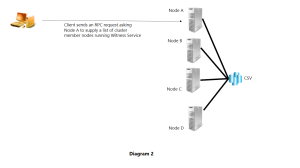

Figure 2 shows a more typical configuration, with a VM using a VHD(x) file (VHD or VHDX). Here the VM is backed up from within the VM, even though other choices exist. The main drawback is that if the Hyper-V host were running 20 such VMs, one would have to pay for 20 Backup App licenses.

Figure 3 shows a VM again using a VHD(x) file, but with the backup being performed from the Hyper-V parent partition. This is a popular configuration since the cost of the Backup App can be amortized over all the VMs being hosted. The Backup App depends upon the VSS infrastructure Microsoft has created, which runs in both the Hyper-V parent partition and inside the VM. Of course, if the VM is running an OS for which no VSS IC requestor exists, this configuration is not feasible.

Once a snapshot is created and a full backup is done, the full backup will include a complete copy of the VHD(x) file. Given that the VHD(x) file will be at least tens of GBs in size, ranging anywhere from 20GB to 100GB or more, it is highly desirable that subsequent backups be differential backups, which only back up the changed data within the VHD(x) file.

And that is where the problem lies. None of Windows 2012, Windows 2012 R2, or Hyper-V 2012 provides a facility to determine the changed blocks within the VHD(x) file.
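To make "changed blocks" concrete, here is a minimal Python sketch of the brute-force fallback: hash the file in fixed-size blocks at each backup and compare against the hashes saved from the previous one. This is an illustration only, not how any particular backup product works. The 1 MiB block size and the function names `block_hashes` and `changed_blocks` are assumptions made for the example.

```python
import hashlib
from typing import BinaryIO, List

# Assumed granularity for the example; not a Hyper-V or VHDX constant.
BLOCK_SIZE = 1024 * 1024


def block_hashes(f: BinaryIO, block_size: int = BLOCK_SIZE) -> List[bytes]:
    """Return one SHA-256 digest per fixed-size block of the stream."""
    hashes = []
    while True:
        chunk = f.read(block_size)
        if not chunk:
            break
        hashes.append(hashlib.sha256(chunk).digest())
    return hashes


def changed_blocks(old: List[bytes], new: List[bytes]) -> List[int]:
    """Return indexes of blocks in `new` that differ from, or do not exist in, `old`.

    Blocks that existed only in `old` (the file shrank) are not reported.
    """
    return [i for i, h in enumerate(new) if i >= len(old) or old[i] != h]
```

A backup run would open the VHD(x) file from the snapshot in binary mode, call `block_hashes` on it, and copy only the blocks whose indexes `changed_blocks` returns. The catch is that this still reads the entire file on every backup, which is exactly the cost a real change-tracking facility would avoid.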

An ideal solution would be installed in the Hyper-V parent partition and could be installed, uninstalled, loaded, and unloaded without requiring a reboot of the parent partition. The VMs, however, would have to be restarted for the change tracking to work. This is shown in Figure 5.

Any ISV or OEM looking for such a generic solution is encouraged to contact me via LinkedIn.